I can’t help it. Suspect data jumps out at me.

I logged into my utility provider's website earlier to pay my gas bill. I'd forgotten how much higher those bills get around here in the winter, so I decided to take a closer look and project how much I should expect to pay over the next few months.

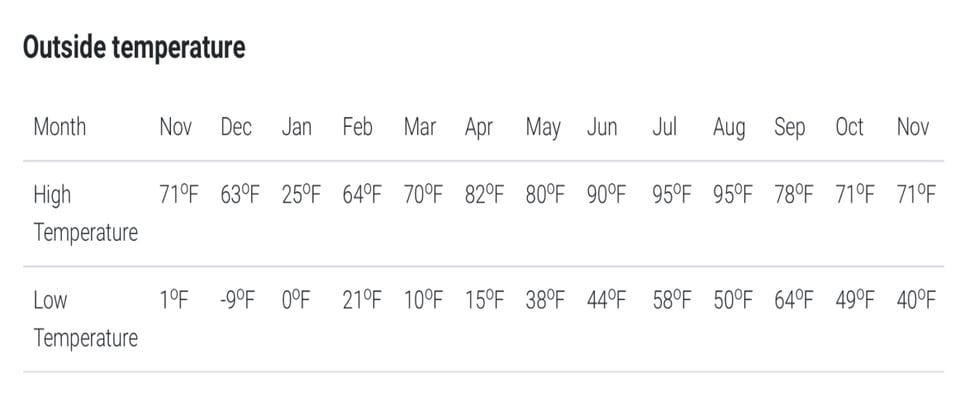

Like many utilities, my natural gas provider includes a couple of handy charts and tables on its bills and website showing my usage trends over time. I look at these each month; they’re handy cues for knowing when it’s time for a showdown over the thermostat settings or when to start yelling at people for taking too long in the shower. Historically, I’ve paid less attention to the local temperature data they also include, but heading into this winter I’m watching outdoor temperature extremes more closely than I ever have before, because now I’m obsessing about the survival chances of my honeybees. So, this time, a little anomaly jumped out at me:

Whaaaat? The highest temperature in the entirety of last January was 25 degrees Fahrenheit? A full 38 degrees lower than the high for any other month? I had a visceral reaction… That can’t be right. My spidey-sense data quality radar tingled. I could, of course, check whether I was right with a simple Google query; historical weather data is, after all, pretty easy to track down. But since I’ve been having a lot of conversations lately about how to help others develop analytical thinking skills in general, and data quality management skills in particular, I thought this might be a good opportunity to take the scenic route.
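The gut check above boils down to simple arithmetic: sort the monthly highs and measure how far the coldest one sits below the next-coldest. Here is a minimal sketch of that check, assuming the provider's table reduces to one high temperature (°F) per month. The function name `flag_low_outlier` and the 30-degree threshold are hypothetical choices for illustration, not anything the provider publishes. Only January's 25°F and the 38-degree gap come from the bill; the other months in the example are placeholder values.

```python
# A minimal sketch of the "that can't be right" gap check.
# Assumption: one high temperature (°F) per month. The function name and the
# 30-degree default threshold are hypothetical, chosen only for illustration.

def flag_low_outlier(monthly_highs: dict[str, float], threshold: float = 30.0):
    """Return (month, gap) if the lowest monthly high sits more than
    `threshold` degrees below the next-lowest one, else None."""
    if len(monthly_highs) < 2:
        return None  # nothing to compare against
    # Sort months by their high temperature, coldest first.
    ranked = sorted(monthly_highs.items(), key=lambda item: item[1])
    (coldest_month, coldest_high), (_, next_high) = ranked[0], ranked[1]
    gap = next_high - coldest_high
    return (coldest_month, gap) if gap > threshold else None


# Illustrative input: January's 25°F and the 38-degree gap come from the bill;
# Feb/Mar/Apr values are placeholders, not real readings.
highs = {"Jan": 25, "Feb": 63, "Mar": 70, "Apr": 78}
print(flag_low_outlier(highs))  # ('Jan', 38)
```

A check like this only flags the symptom. It can’t say whether the number is a data error or a genuinely brutal January, which is why the triage questions still matter.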

The first thing I do when I set out to triage a suspected data quality issue is ask myself a few initial, grounding questions:

- What kind of possible data quality problem does this represent?
- How important is this, really, and what are the potential consequences of ignoring this type of problem?
- What other data could I use to help me determine whether there is an issue of this sort in my dataset?
- Is that other data easily/inexpensively/reliably available?
- Who (besides me) might care about issues of this sort?

And, very much not least of all:

- What biases have I brought and what assumptions have I made that may be setting me up to be totally wrong about this?

In my next post, I’ll dig into the motivation for these questions, work through answering them, and set about determining whether the data, or my knee-jerk reaction, is to be trusted... this time.

Thanks for reading! A quick programming note: my next post won’t be published here on Substack. If you’ve previously subscribed here, you can expect an e-mail from me at the new location; you’ll have to opt in again if you’d like to keep receiving my newsletter. If you’ve come across this post at a later date, you can find my future posts by visiting my personal site.
